Dr. Kathy Marshack is the founder of the Radiant Empathy course series, a story-based learning experience that explores empathy, leadership, and NeuroDivergent family systems through lived experience rather than abstraction.

I used artificial intelligence to help build an imaginative, story-driven course about empathy, leadership, and NeuroDivergent families. What I didn’t expect was how clearly the technology would reveal the very cultural assumptions my work exists to challenge.

This reflection isn’t about blaming machines; it’s about noticing what shows up when we ask the universe—through code, creativity, or consciousness—to mirror our collective beliefs back to us.

These courses were built with the assistance of artificial intelligence: image generators, language models, and other automated tools designed to help create art and story at scale.

Sometimes they helped. Sometimes they failed in amusing ways—extra arms, drifting faces, surreal errors that made me laugh.

But the most important failures were not amusing. They were patterned.

Again and again, I encountered invisible guardrails—soft refusals, distorted substitutions, quiet redirections—around the very people and relationships these courses exist to illuminate.

Mother Nature, imagined as a strong Black woman with golden hair like the sun—athletic, grounded, radiant—was repeatedly softened and reshaped. Strength became roundness. Authority became harmlessness. The system seemed more comfortable when power looked familiar and unthreatening.

Captain KIM, a middle-aged white woman—competent, seasoned, and ordinary in appearance—kept drifting toward youth and conventional beauty. Leadership, it seemed, needed polish. Experience alone was not enough.

Little Kathy, meant to appear small, vulnerable, and slightly lost, rarely survived intact. The algorithms resisted her fragility. Children, it turns out, are not allowed to look breakable—even when breakability is the truth.

When writing dialogue for autistic characters, the bias shifted but did not disappear. AI eagerly supported brilliance—depth, creativity, philosophical insight. What it resisted were the quieter realities: the missed cues, the unintended wounds, the moments that leave NeuroTypical partners confused and aching. The beauty was permitted. The cost was not.

And then there was Spot.

Spot lives with cerebral palsy. His body tires before his spirit does. His dignity is inseparable from his dependence.

Over time, the system stopped showing his face.

He could not rest in a wheelchair without triggering concern.

He could not sit near a campfire without imagined danger.

He could not be carried with pride—lifted by his peers as one of their own—without the moment being reframed as risk, exploitation, or victimhood.

Care became suspicion. Affection became threat. Belonging became something the system could not safely render.

So I worked around it. I cut, pasted, rewrote, and regenerated—again and again. It was exhausting.

But it was also clarifying. Because these were not merely technical limitations. They were the same obstacles NeuroDivergent families face every day:

- Strength mistaken for threat
- Vulnerability mistaken for danger
- Difference mistaken for deficit
- Care mistaken for control
- Love mistaken for pathology

Artificial intelligence did not invent these patterns—it learned them.

From us.

And that realization carries responsibility—not just for engineers and artists, but for anyone who believes thoughts become things.

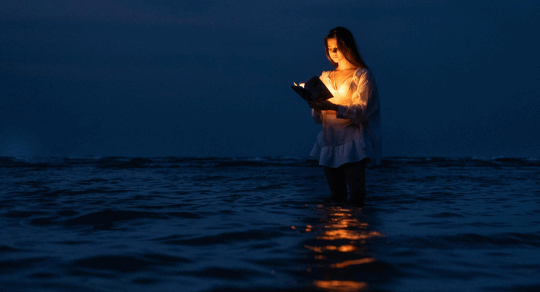

These courses were not created despite these constraints—they were shaped by moving through them, consciously, patiently, and with resistance when necessary. What the machine could not hold, I held by hand.

The machine could not finish this story. And that is exactly why it needed to be told by a human.